Jingzhi Bao

My research focuses on multi-modal understanding/generation and 3D foundation models. I am currently working on technical scaling of foundation models to production-ready standards.

Education

University of Illinois Urbana-Champaign (UIUC)

Master in Computer Science, Sep. 2025 - Present

The Chinese University of Hong Kong, Shenzhen

B.Eng. in Computer Science and Engineering, Sep. 2021 - July 2025

Nanyang Technological University (NTU)

Exchange Student, Aug. 2023 - Dec. 2023

Experience

ByteDance

Research Intern, May 2026 - Present

Lightspeed Studios, Tencent Games

Research Intern, Feb. 2025 - Aug. 2025

MeshyAI

Collaboration, 2025

University of California, Merced

Visiting Student, June 2024 - Dec. 2024

Publications

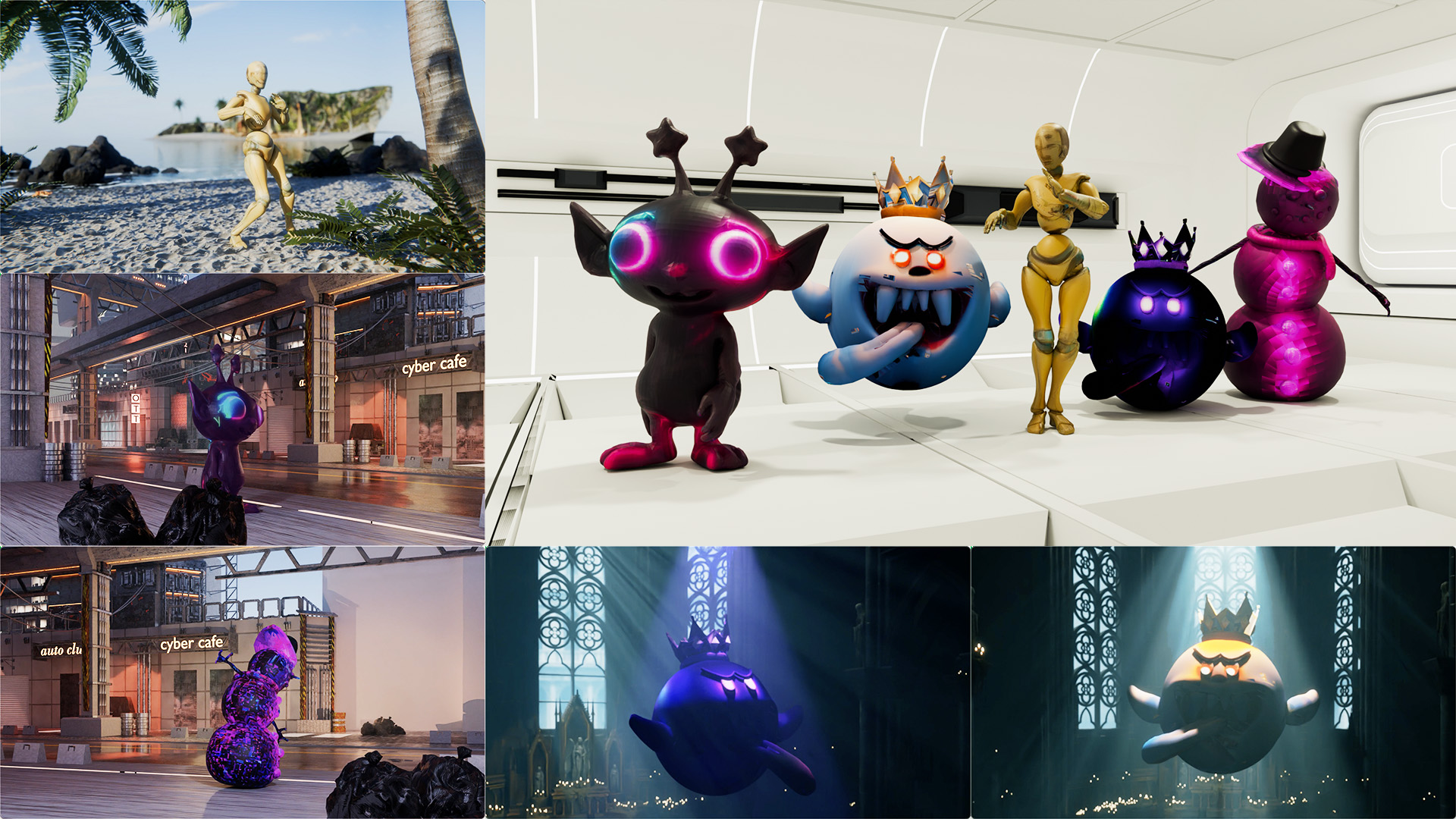

Toward Next-Generation 3D Texture Generation

Meshy Texture Team

Technical Report

A texture generation model that delivers highly accurate, photorealistic, professional-level PBR textures and normal maps, with support for multi-view inputs. Led the project across data filtering, pipeline infrastructure optimization, model training, quantization, distillation, and arena evaluation.

CaliTex: Geometry-Calibrated Attention for View-Coherent 3D Texture Generation

Chenyu Liu, Hongze Chen, Jingzhi Bao, Lingting Zhu, Runze Zhang, Weikai Chen, Zeyu Hu, Yingda Yin, Keyang Luo, Xin Wang

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2026

A geometry-calibrated attention framework (geo-aligned attention plus condition-routed attention) that achieves strong alignment between textures and 3D structure. CaliTex produces cross-view consistent textures, outperforming both open-source and commercial methods (Hunyuan3D 2.1/3.0).

LumiTex: Towards High-Fidelity PBR Texture Generation with Illumination Context

Jingzhi Bao*, Hongze Chen*, Lingting Zhu, Chenyu Liu, Runze Zhang, Keyang Luo, Zeyu Hu, Weikai Chen, Yingda Yin, Xin Wang, Zehong Lin, Jun Zhang, Xiaoguang Han

International Conference on Learning Representations (ICLR), 2026

A production-ready PBR generation method that surpasses state-of-the-art open-source and commercial methods (Hunyuan3D 2.1, Meshy-5, TripoAI v2.5).

Tex4D: Zero-shot 4D Scene Texturing with Video Diffusion Models

Jingzhi Bao, Xueting Li, Ming-Hsuan Yang

arXiv preprint, 2024

We present Tex4D, a zero-shot framework that fuses 3D geometric priors from mesh sequences with the generative power of video diffusion models to produce multi-view, temporally consistent 4D textures.

Photometric Inverse Rendering: Shading Cues Modeling and Reflectance Regularization

Jingzhi Bao, Guanying Chen, Shuguang Cui

International Conference on 3D Vision (3DV), 2025

A differentiable inverse rendering framework that jointly optimizes lighting and materials for photometric images, achieving accurate self-shadow handling and inter-reflection estimation. DINO-based feature distillation delivers more consistent surface reflectance decomposition.